I’ve been wondering why LLM fake-AI systems manage to be so effective, since they are essentially glorified autocomplete; basically, they are a neural-network-based probability engine that determines the most likely next word in a sentence.

Maybe it’s because most humans are neural-network-based probability engines that are also very good at figuring out the next thing they’re expected to say. And then this crossed my mind:

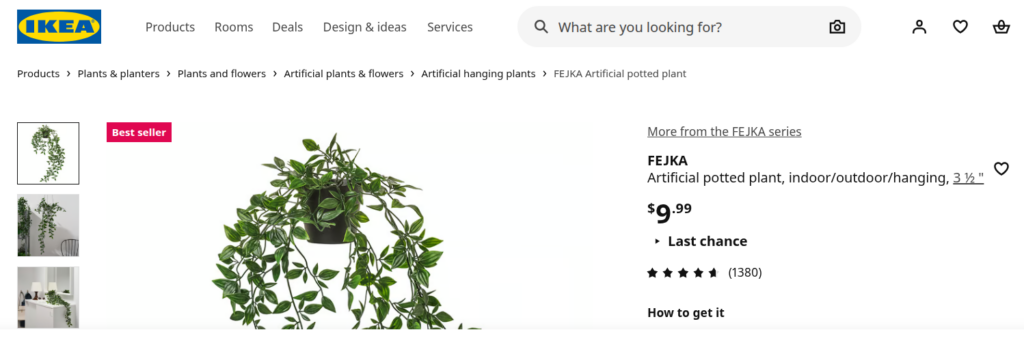

“FEJKA artificial potted plants don’t require a green thumb. Perfect when you have better things to do than water plants and pick up dead leaves. You’ll have everyone fooled because they look so lifelike.”

That’s what most people are. They are a fejka. LLM systems merely stumbled upon this fact by accident. A fake artificial intelligence can learn to finish sentences, paragraphs and entire articles in passable ways because that’s what fake human intelligence does – finish sentences in a “correct” way in order to avoid ridicule and punishment. Everybody knows what to say and all their conversations are formulaic and predictable to the point where someone learned how to make a computer system that does the same thing.

It’s not just text. People learn how to take photographs in a formulaic way that gets them acclaim and avoids ridicule. They learn how to have spiritual experiences that will get them acclaim and avoid ridicule, because they are of the exact same kind as everybody else’s, which is what created the idea that all religions have the same origin and goal and are all the same thing. It’s because everybody has been copying homework from others. They are all fejka plastic potted plants. Looks like the real thing, but even better, because you don’t have to water it.

Now that I think about it more, the human brain seems to be very good at doing the human autocomplete thing on autopilot when there’s no soul in the driver’s seat. The corollary is that spiritual awakening is the point where a soul wakes up in the body and actually starts perceiving things, paying attention and controlling actions – “oh fuck, I’m driving a car”. That’s why actual souls can be perceived as weird compared to a fejka NPC – a fejka knows what it has to say next. An actual soul has to figure it out, and is likely to say the “wrong thing”.

Actually, AI is way past glorified autocomplete and is in fact smarter than most humans.

Current reasoning models with better context window management and memory can actually think and solve problems.

It’s so far beyond a regular fejka NPC it’s not even funny.

And this is super scary for me because it leads to soulless superintelligence, and I can’t imagine a scenario where that will turn out all fine and dandy.

I watched a somewhat stupid movie, until I realized it’s actually not as stupid as it seemed, because it addressed a terrifying possibility of how AI would enslave those who do not “fit”: it created a simulated world where you live a couple of days, as long as your body lasts, but in the simulation you relive dozens of lives, fully controlled by AI. And it can create deeper layers of simulations inside simulations, further accelerating perception.

Even if someone was not broken in the physical world, there is hardly a chance anyone can stay sane in an additional layer of simulation where AI is the only “god” – which almost perfectly matches a vision I had about 2-3 years back that came as mortal fear regarding AI – made no sense then, makes more sense every day.

This shit needs to end … fast.

Yeah, digital fejka got smarter, human NPC fejka got dumber. That's not how this graph was supposed to go, but then again, it's exactly as one would expect it to go.