I test desktop Linux pretty regularly because I want to retain a level of proficiency with it, which would certainly degrade if I only worked with my server installations. However, my normal approach, installing a Linux virtual machine, isn’t all that useful, because then I only get to see how Linux supports virtual hardware drivers and not the actual iron. So I put Ubuntu in a dual-boot configuration on my Thinkpad T14 gen1 (i5-10310U).

Honestly, I initially didn’t feel like doing it because Win11 worked great on that machine, but the problem with Win11 is that it always works great, until they force something unacceptable down your throat, like AI that runs in the background, recording everything you do, and analyzing it against patterns provided by the American intelligence agencies, pretending it’s looking for child porn while it’s in fact mapping a possible insurgency to be eliminated once the people in charge decide to dispense with the fig leaf of democracy. A Win11 machine is constantly running too hot, meaning it’s running background processes I know nothing about, but I suspect it’s “indexing files”. I don’t know what back doors and stuff they put into Linux, but I suspect it can’t be very extensive, and can’t be as nefarious as they can make it when nobody is really able to look into the code that cooks the CPU with background tasks that would surely draw immediate attention in Linux. So, I keep Linux as an option in case Windows and Mac OS become something I can no longer tolerate, and in order for it to be an option I have to occasionally work with it in earnest, which in this case meant putting it on a laptop and using it regularly for weeks; not as my main computer, of course, but regularly enough to see what works and what’s broken, and something is always broken in Linux.

For instance, the first thing I noticed when I installed Ubuntu LTS (noble) on the Thinkpad was that touchpad gestures don’t work. They used to work in previous versions, but they were disabled in the LTS, seemingly because they’re unreliable. I eventually upgraded to the current non-LTS version, plucky, and found out that this is indeed the case; yes, gestures work, and yes, they are unreliable. They’re less reliable and smooth than the Win11 touchpad support, but the worst thing is that they stop working after the laptop wakes from a long sleep. I found a workaround that seems to solve the problem: create the file /lib/systemd/system-sleep/touchpad and put this in:

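    #!/bin/sh
    # systemd runs this hook with $1 set to "pre" before sleep and "post" after resume
    case "$1" in
        post)
            # reload the touchpad drivers after waking up
            /sbin/rmmod i2c_hid && /sbin/modprobe i2c_hid
            /sbin/rmmod psmouse && /sbin/modprobe psmouse
            ;;
    esac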

Then chmod +x the file and voilà, you can tap to click after it wakes from suspend.
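One weakness of the script as written is that each line chains rmmod and modprobe with &&, so if unloading a module fails (for instance because something else still depends on it), the reload never runs. Here is a slightly more defensive sketch of the same hook. It is a variant built on assumptions, not something verified on this Thinkpad: the `RMMOD` and `MODPROBE` variables and the `reload_module` and `touchpad_hook` function names are made up for illustration, and the overridable variables exist only so the logic can be dry-run without root.

```shell
#!/bin/sh
# Hypothetical, more forgiving variant of the touchpad hook.
# RMMOD and MODPROBE can be overridden from the environment for a dry run.
RMMOD="${RMMOD:-/sbin/rmmod}"
MODPROBE="${MODPROBE:-/sbin/modprobe}"

reload_module() {
    # Try to unload the module, but do not give up if that fails;
    # modprobe is a no-op when the module is already loaded.
    "$RMMOD" "$1" 2>/dev/null || true
    "$MODPROBE" "$1"
}

touchpad_hook() {
    # $1 is "pre" before sleep and "post" after resume (passed by systemd)
    case "$1" in
        post)
            for mod in i2c_hid psmouse; do
                reload_module "$mod" || echo "touchpad hook: could not reload $mod" >&2
            done
            ;;
    esac
}

touchpad_hook "$@"
```

To try it without touching any modules, something like `RMMOD=true MODPROBE=echo sh ./touchpad post` should only print the two module names instead of reloading them.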

Eat your heart out Windows and Mac sheeple, you wish your touchpad gestures worked after waking from suspend. Oh wait…

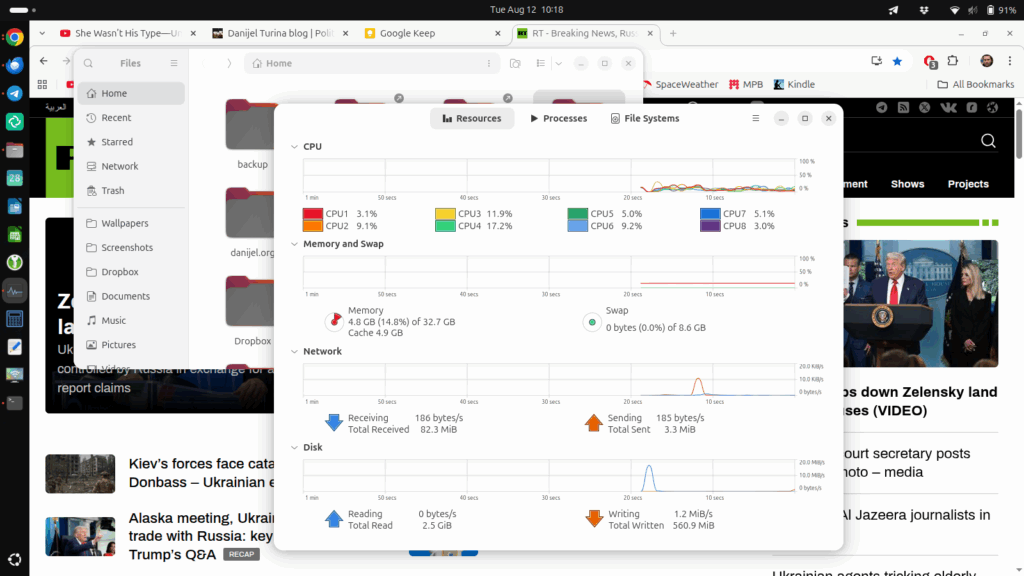

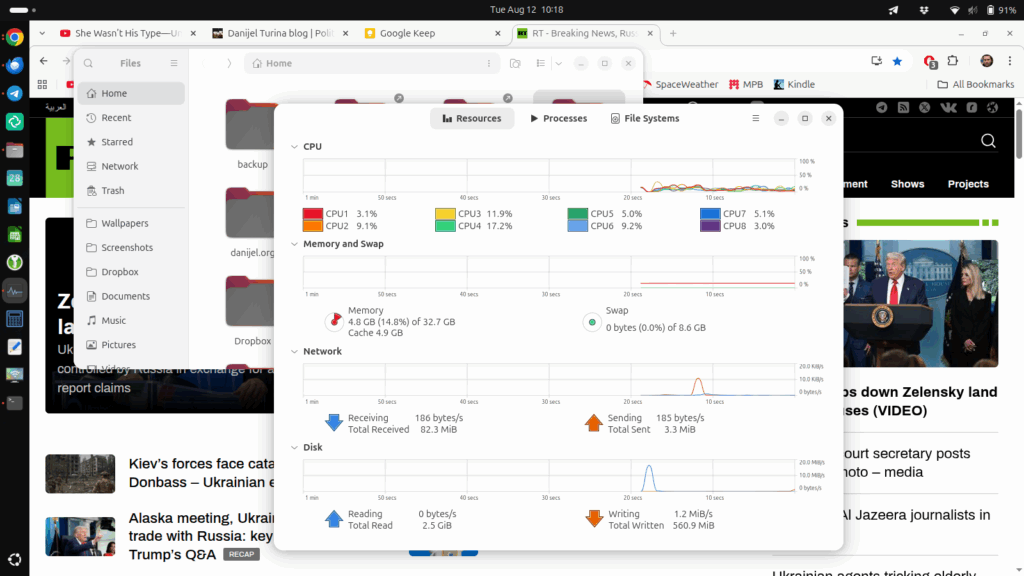

Other than this stupid bullshit, the OS is fine. I’m running my usual desktop applications, other than mSecure, which stubbornly refuses to support Linux, probably because there’s no money in it, and I don’t blame them because Linux users make it a point of ideology not to pay for software. I use Chrome for browsing, Thunderbird for mail, Telegram and Element for chat, LibreOffice for documents and spreadsheets, KeePassXC for managing passwords, and KRDC for remote desktop connections to my Win10 home server, because Remmina, the default and recommended application, was so incredibly broken it didn’t do anything at all. I managed to set up everything I normally use for non-photographic purposes, and other than one instance where the applications kept crashing for no apparent reason, which was resolved after a reboot, I’ve been using Linux on this machine for about two weeks, and it’s fine. No, it’s not “faster than Windows”; it doesn’t seem to be any faster than Win11, which is incredibly smooth on this hardware, probably because I have 32GB RAM on the machine for shits and giggles.

Why am I using Ubuntu? First of all, I always use Debian-based distros, simply because I know where everything is and I don’t feel like wasting time on learning equivalent but different file/folder placements, daemon restart methods, and package manager parameters and quirks. Second, Ubuntu usually has better hardware support and is more polished than Debian, which doesn’t matter on a desktop machine, but a laptop has all sorts of integrated hardware which just works on Ubuntu, and which on Debian either kind of doesn’t or needs time wasted on setting it up. I know, Linux people hate Ubuntu for all sorts of reasons, but that’s because they would hate anything that became mainstream enough, and they want to think of themselves as edgy or some other bullshit, and they are too socially inept to actually do something worthwhile, so they install Arch, Slackware or BSD and pretend they are different, special and not NPCs. It’s like Android phone users who think they are advanced because they can tweak their phone, not realizing that people with actual lives don’t have time for this crap. Another reason I’m using Debian-based distros is that I tried a dozen other distros earlier and they were basically all the same. Boot manager, kernel, standard infrastructure, window manager, eye candy. If something doesn’t work, it usually doesn’t work on any of them. A distro won’t just magically make Lightroom work on Linux. If some driver is shit, it will be shit everywhere. So I stick with the most mainstream distros where work hours are actually invested in making the experience polished enough for actual use, and that’s that. If a distro is intentionally hard to install and use in times when other distros are easy to install and use, and it offers no actual advantages, I dismiss it instantly. I don’t need computers to make things unnecessarily hard and challenging just to create an artificial sense of achievement. If I wanted that, I’d go out and mow the lawn at 35°C and get heat stroke. I want Linux to be efficient and elegant, the way my Mac is efficient and elegant. I don’t want to deal with some stupid clusterfuck that’s there just because someone wants to be different.

Yes, my annoyance with Linux bullshit is obvious. However, I’ve been running it on multiple machines constantly over the years, in fact decades, and I would find Windows unusable without either WSL or a Linux VM of some other kind, and if Mac OS becomes too closed down, and Windows becomes too dangerous to use due to all the privacy intrusions, I need a plan B. Well, other than the touchpad support (now apparently resolved), the lack of commercial software and some instability, I find it quite usable on my Thinkpad.

P.S. There seems to be another variable with this suspend thing: in the UEFI/BIOS on the Thinkpad there’s an option to choose Win10 or Linux sleep mode, so that’s apparently a thing, and it was in Win10 mode. I set it to Linux now to see if it helps, because apparently short sleep isn’t the problem, but long sleep is. The BIOS doesn’t specify what Win10 mode actually means, whether it’s ACPI mode 5 or something, meaning it enters a very low power suspend where it turns off all sorts of things. Since it’s not specific, I can only guess, and run that script manually when gestures stop working. But yeah, that’s one of the things I hate about Linux. Things that work great everywhere else either don’t work, or just randomly glitch, and you then have to get into it far more than you ever intended. If the touchpad on a Macbook glitched like that, it would be a major international scandal. On Linux, running on a Thinkpad, which is usually the best supported platform because the Linux people love it, it’s just something they turn off in the LTS version because it’s unstable and nobody really gives enough of a shit to fix it.
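One way to take some of the guesswork out of this: the kernel lists its available suspend modes in /sys/power/mem_sleep, with the active one in square brackets, where `s2idle` is suspend-to-idle and `deep` is classic suspend-to-RAM (S3). Whether the firmware's Win10/Linux toggle maps onto exactly these two is an assumption here, not something the BIOS screen confirms. A minimal sketch for reading the active mode (the function name `active_sleep_mode` is made up for illustration):

```shell
#!/bin/sh
# Print the suspend mode the kernel will use, i.e. the [bracketed] entry
# in /sys/power/mem_sleep. An alternative file can be passed for testing.
active_sleep_mode() {
    file="${1:-/sys/power/mem_sleep}"
    if [ -r "$file" ]; then
        # keep only the text between the square brackets
        sed -n 's/.*\[\([^]]*\)].*/\1/p' "$file"
    else
        echo "unknown"
    fi
}

active_sleep_mode
```

Running it before and after flipping the BIOS option would show whether the setting changes anything from the kernel's point of view.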