I was watching some YouTube videos about old computers, thinking: which ones are predecessors of our current machines, and which ones are merely extinct technology, blind alleys that led nowhere?

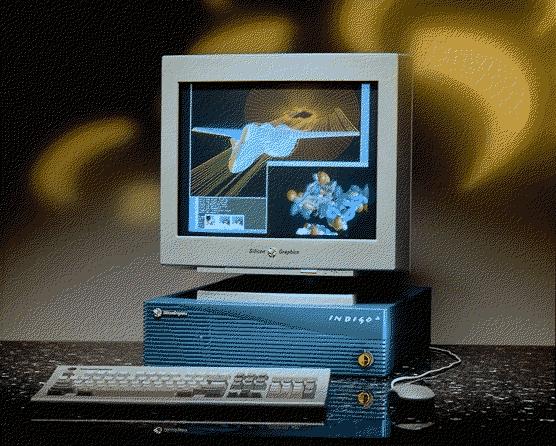

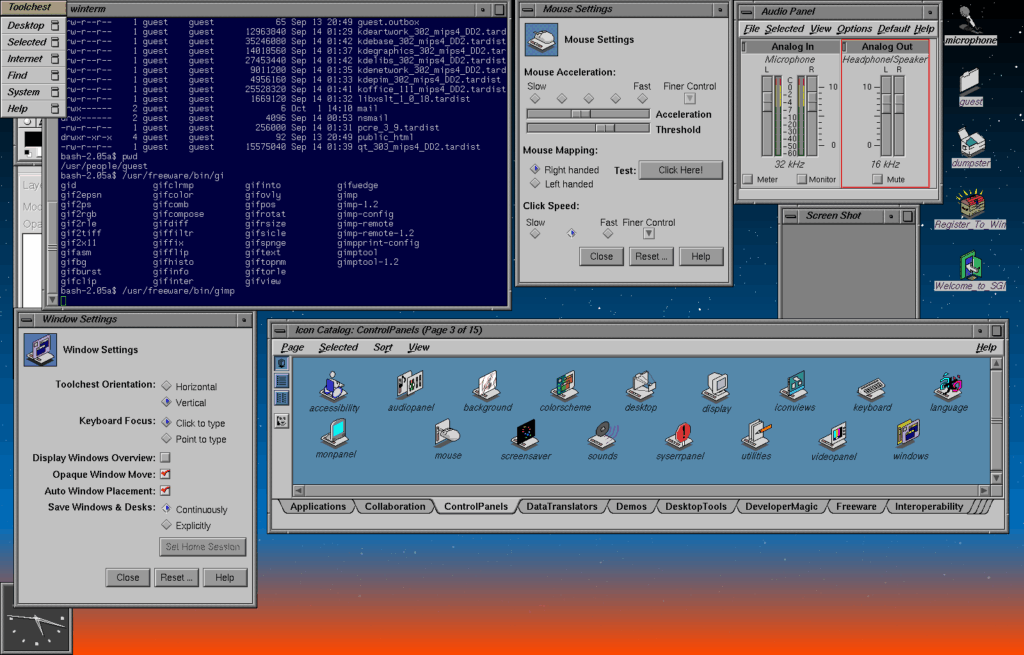

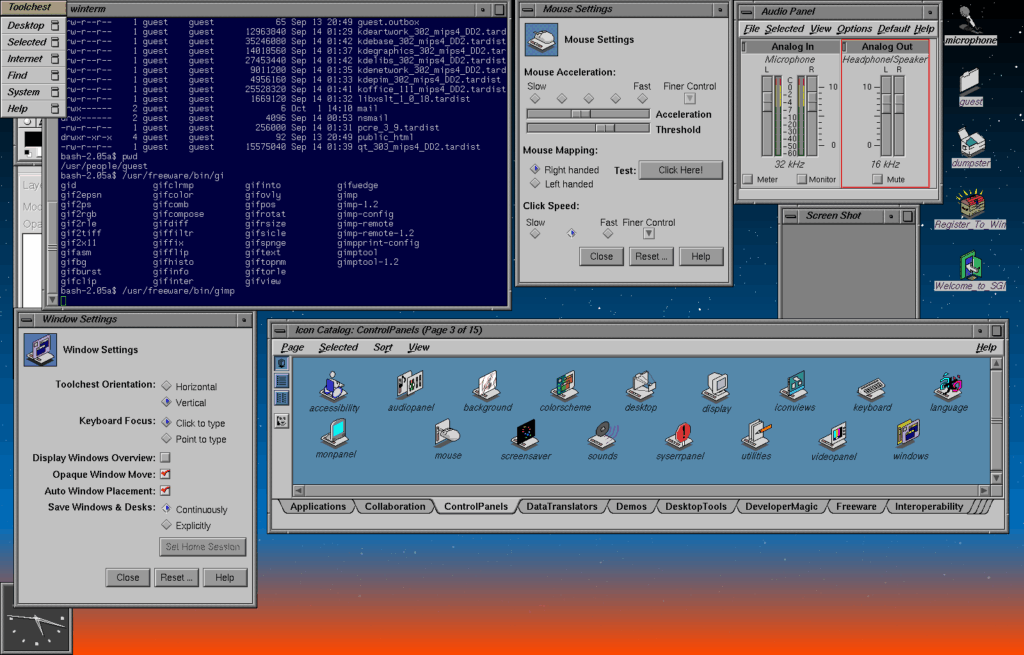

It’s an interesting question. I used to assume that the home computers of the 1980s were the predecessors of current machines, but then I saw someone working on an old minicomputer running UNIX, a PDP-11 or something, and that thing felt instantly familiar, unlike the ZX Spectrum, Apple II or Commodore 64, which feel nothing like what we have today. Is it possible that I had it wrong? The first Macintosh feels very much like the user interface we use today, but it was a technology demonstration that couldn’t do much useful work yet, because the hardware was too weak. And where did the Macintosh come from? The Lisa, of course. And the Lisa was an attempt to make the Xerox Alto streamlined and commercially viable. All three were failures; the idea was good, but the technology wasn’t there yet. The first computers that feel exactly like what we use now were the graphical workstations from Silicon Graphics and Sun, because they were basically minicomputers with a graphical console and a 3D rendering engine.

It’s almost as if home computers were a parallel branch of technology, related more to Atari arcade machines than to the minicomputers and mainframes of the day, created as attempts to work with inferior but cheap technology. That branch evolved from the Altair to the Apple II to the IBM PC, and the PC in turn evolved from the 8088 to the 80286 to the 80386, at which point the technology became viable enough for Microsoft to copy the Macintosh interface and turn it into a mass-market OS. Windows then evolved from 3.0 to 95 to 98… and then this entire technological blind alley went extinct, because technology became advanced enough to erase the difference between UNIX graphical workstations and personal computers. Microsoft started running a minicomputer-class kernel on a PC and called it NT. At version 4 it became a viable competitor to Windows 95, Windows 2000 ran the NT kernel, and with Windows XP the 95/98/ME kernel was retired completely, ending the playground phase of PC technology and making everything a graphical workstation.

Parallel to that, Steve Jobs, exiled from Apple, was tinkering with his NeXT graphical workstation project, which became quite good but didn’t sell. When Apple begged him to come back and save them from themselves, he brought the NeXTSTEP OS, and that became Mac OS X on the new generation of Macintosh computers. So, basically, the PC architecture was in its infancy, playing with cheap but inferior hardware, until hardware prices came down so much that what used to be reserved for high-cost graphical workstations became inexpensive. At that point the graphical workstation stopped being a niche thing, went mainstream, and drove the personal computer as it used to be into extinction.

Just think about it: today’s computer has a 2D/3D graphics accelerator, either integrated into the CPU or dedicated; it runs UNIX or a Unix-like system (macOS and Linux) or something very similar, built on the minicomputer-style NT kernel (Windows); it’s a multi-user, seamlessly multitasking system; and it all runs on hardware so integrated that it fits in a phone.

So, the actual evolution of personal computers goes from an IBM mainframe to a DEC minicomputer to a UNIX graphical workstation to Windows NT 4 and Mac OS X, to iPhone and Android.

The home computer evolution goes from the Altair 8800 to the Apple I and II to the IBM PC, then from MS-DOS to Windows 3.0, 95, 98, ME… and goes extinct. The attempt to make a personal computer with a graphical user interface goes from the Xerox Alto to the Apple Lisa to the Macintosh, then to the Macintosh II, with the OS upgraded all the way to version 9… and goes extinct, replaced by a successor to NeXT repackaged as the new generation of Macintosh, with an OS built around UNIX. Then at some point the tech got so miniaturised that we now have phones running UNIX-derived systems, a mainframe/minicomputer OS lineage inherited directly from the graphical workstations.

Which is why you could take an SGI Indigo2 workstation today and recognise it as a normal computer, slow but functional, whereas the first IBM PC or Apple II would feel like absolutely nothing you are accustomed to. That’s because your PC isn’t descended from the IBM PC; it’s descended from a mainframe that mimicked the general look of a PC and learned to be backwards compatible with one.